commit

c3f44fab85

2

LICENSE


|

|

@ -186,7 +186,7 @@

|

|||

same "printed page" as the copyright notice for easier

|

||||

identification within third-party archives.

|

||||

|

||||

Copyright [yyyy] [name of copyright owner]

|

||||

Copyright [2023] [Leandro Antônio Farias Machado]

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License");

|

||||

you may not use this file except in compliance with the License.

|

||||

|

|

|

|||

213

README.en.md


|

|

@ -1,213 +0,0 @@

|

|||

<p align="center">

|

||||

<img src="https://user-images.githubusercontent.com/83298718/220207485-8c2aac78-95eb-4b43-b23e-c4bfa6cd30e6.png"/>

|

||||

</p>

|

||||

<br/>

|

||||

|

||||

<ul>

|

||||

<li>

|

||||

<h4>Introduction:</h4>

|

||||

</li>

|

||||

</ul>

|

||||

<p>

|

||||

This repository aims to promote the development of a multi-vendor management platform for IoT devices. Any device that follows the TR-369 protocol can be managed. The main objective is to simplify and unify device management, which brings countless benefits to end users and service providers and meets the demands of today's technologies: device interconnection, data collection, speed, availability and much more.

|

||||

</p>

|

||||

<ul>

|

||||

<li>

|

||||

<h4>TR-069 ---> TR-369 :</h4>

|

||||

</li>

|

||||

</ul>

|

||||

<p>

|

||||

The advent of the Internet of Things brings countless opportunities and challenges for service providers. With over a billion devices across the globe using <a href="https://www.broadband-forum.org/download/TR-069_Amendment-2.pdf">TR-069</a> today, what is the future of the protocol, and what can we expect ahead?

|

||||

</p>

|

||||

<p>

|

||||

CWMP (CPE WAN Management Protocol), better known as TR-069, opened many doors for the provider ecosystem. It makes it possible to deliver services quickly that meet or exceed customer expectations, with proactive and secure network management, while also lowering costs and improving efficiency for service providers.

|

||||

</p>

|

||||

<p>

|

||||

With the rise of what we now call the smart home, the Internet of Things and the demand for increasingly interconnected, cloud-based environments, new demands and obstacles have emerged, opening the door to a new form of communication that meets the needs of the current market.

|

||||

</p>

|

||||

<p>

|

||||

There is a fierce race to monetize the IoT devices that are now part of the connected home and other environments. As a result, many companies are creating their own proprietary solutions; this is understandable given the pressure generated by the promise of Smart Home monetization. Unfortunately, these applications contribute to a poor ecosystem, where a provider ends up dependent on and limited to a vertical solution from a single vendor. This creates a <b>low-competition environment (which leads to greater risks), less innovation, and the potential for very high-cost solutions</b>.

|

||||

</p>

|

||||

<p>

|

||||

The technologies behind Wi-Fi, device-to-device connectivity, the Smart Home and IoTs are constantly evolving and improving. It is important that when service providers look for a solution, they look for something that is "future proof", always thinking ahead.

|

||||

</p>

|

||||

<p>

|

||||

To address the challenges mentioned above, providers and manufacturers together developed USP (User Services Platform), defined by the Broadband Forum's TR-369 standard, which is the natural evolution of TR-069. <b>This new standard is designed to be flexible, secure, scalable and standardized to meet the demands of a connected world, today and in the future.</b>

|

||||

</p>

|

||||

|

||||

<ul>

|

||||

<li>

|

||||

<h4>Companies/Institutions involved in the creation of TR-369:</h4>

|

||||

<ul>

|

||||

<li>

|

||||

Google

|

||||

</li>

|

||||

<li>

|

||||

Nokia

|

||||

</li>

|

||||

<li>

|

||||

Huawei

|

||||

</li>

|

||||

<li>

|

||||

Axiros

|

||||

</li>

|

||||

<li>

|

||||

Orange

|

||||

</li>

|

||||

<li>

|

||||

Commscope

|

||||

</li>

|

||||

<li>

|

||||

Assia

|

||||

</li>

|

||||

<li>

|

||||

AT&T

|

||||

</li>

|

||||

<li>

|

||||

NEC

|

||||

</li>

|

||||

<li>

|

||||

Arris

|

||||

</li>

|

||||

<li>

|

||||

QA Cafe

|

||||

</li>

|

||||

</ul>

|

||||

</li>

|

||||

</ul>

|

||||

|

||||

--------------------------------------------------------------------------------------------------------------------------------------------------------

|

||||

|

||||

<ul>

|

||||

<li>

|

||||

<h4>Topology:</h4>

|

||||

</li>

|

||||

</ul>

|

||||

|

||||

<img src="https://usp.technology/specification/architecture/usp_architecture.png"/>

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

<ul>

|

||||

<li>

|

||||

<h4>Protocols:</h4>

|

||||

|

||||

|

||||

</li>

|

||||

</ul>

|

||||

|

||||

<ul>

|

||||

<li>

|

||||

<h4>Notifications/Data collection:</h4>

|

||||

You can create notifications that fire on a value change, object creation or removal, operation completion, or an event.

|

||||

|

||||

|

||||

</li>

|

||||

</ul>
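The notification triggers listed above correspond to the `NotifType` values of a USP subscription (`ValueChange`, `ObjectCreation`, `ObjectDeletion`, `OperationComplete`, `Event`). Below is a minimal sketch of how a controller-side script might assemble the parameters for a new entry under `Device.LocalAgent.Subscription.`. The helper name `build_subscription` and the dictionary layout are assumptions made for illustration; they are not the request format of this project's API.

```python
# Hypothetical helper: assembles the settings for a USP subscription object.
# The dict layout is illustrative only, not this project's API schema.

VALID_NOTIF_TYPES = {
    "ValueChange",
    "ObjectCreation",
    "ObjectDeletion",
    "OperationComplete",
    "Event",
}


def build_subscription(sub_id, notif_type, reference_list, enable=True):
    """Return the object path and parameter settings for one subscription."""
    if notif_type not in VALID_NOTIF_TYPES:
        raise ValueError(f"unsupported NotifType: {notif_type!r}")
    return {
        "obj_path": "Device.LocalAgent.Subscription.",
        "param_settings": {
            "Enable": "true" if enable else "false",
            "ID": sub_id,
            "NotifType": notif_type,
            # ReferenceList is a comma-separated list of data model paths.
            "ReferenceList": ",".join(reference_list),
        },
    }


if __name__ == "__main__":
    # Example: be notified whenever the first SSID name changes.
    print(build_subscription("ssid-watch", "ValueChange",
                             ["Device.WiFi.SSID.1.SSID"]))
```

Validating the notification type before sending anything keeps typos from reaching the device, where they would only surface as a rejected request.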

|

||||

|

||||

<ul>

|

||||

<li>

|

||||

<h4><a href="https://github.com/BroadbandForum/obuspa">OB-USP-A</a> (Open Broadband User Services Platform Agent):</h4>

|

||||

<ul>

|

||||

<li>

|

||||

Designed for embedded software (~400kb on ARM)

|

||||

</li>

|

||||

<li>

|

||||

Written in C

|

||||

</li>

|

||||

<li>

|

||||

License <a href="https://opensource.org/license/bsd-3-clause/">BSD 3-Clause</a>

|

||||

</li>

|

||||

<li>

|

||||

Made for Linux environments

|

||||

</li>

|

||||

</ul>

|

||||

</li>

|

||||

</ul>

|

||||

|

||||

<ul>

|

||||

<li>

|

||||

<h4>Data Analysis</h4>

|

||||

The protocol has a mechanism called "Bulk Data" that makes it possible to collect large volumes of data from the device. The data can be collected over HTTP or another telemetry MTP defined in the TR standard, and can be in JSON, CSV or XML format. This creates the opportunity to apply AI to this data and obtain relevant information for different purposes, such as predicting events, tracking KPIs, informing the commercial area, and finding the best configuration for a device.

|

||||

</li>

|

||||

</ul>
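As a rough illustration of the Bulk Data idea above, the sketch below flattens a JSON report into rows that could be fed to an analysis pipeline and re-exports them as CSV. The sample payload is an assumption modeled on a name-value pair style report (a `Report` array whose entries carry a `CollectionTime` plus parameter paths); a real device may use a different layout, and the function names are hypothetical.

```python
import csv
import io
import json

# Assumed sample payload; real reports depend on the device's profile settings.
SAMPLE_REPORT = json.dumps({
    "Report": [
        {
            "CollectionTime": 1700000000,
            "Device.WiFi.SSID.1.Stats.BytesSent": 1024,
            "Device.WiFi.SSID.1.Stats.BytesReceived": 2048,
        }
    ]
})


def report_to_rows(report_json):
    """Flatten a report into (collection_time, parameter, value) tuples."""
    doc = json.loads(report_json)
    rows = []
    for entry in doc.get("Report", []):
        collected_at = entry.get("CollectionTime")
        for name, value in entry.items():
            if name == "CollectionTime":
                continue  # the timestamp is carried on every row instead
            rows.append((collected_at, name, value))
    return rows


def rows_to_csv(rows):
    """Serialize the flattened rows as CSV text with a header line."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["collection_time", "parameter", "value"])
    writer.writerows(rows)
    return buffer.getvalue()


if __name__ == "__main__":
    print(rows_to_csv(report_to_rows(SAMPLE_REPORT)))
```

A long format with one row per parameter is convenient here because new parameters can appear in later reports without changing the column set.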

|

||||

|

||||

<ul>

|

||||

<li>

|

||||

<h4>Wi-Fi:</h4>

|

||||

The protocol has over 130 Wi-Fi configuration and diagnostics metrics. Many of these settings and parameters are a trade-off between signal coverage area, latency and throughput. When deploying Wi-Fi systems, there is a tendency to keep the same configuration on all clients, causing the technology to perform below expectations. Machine learning combined with the data analysis mentioned in the previous topic makes it possible to automate the management and optimization of wireless networks, where a big data approach can find the ideal configuration for each device.

|

||||

|

||||

|

||||

</li>

|

||||

</ul>

|

||||

|

||||

<ul>

|

||||

<li>

|

||||

<h4>Commands:</h4>

|

||||

It is possible to run commands remotely on the device, such as firmware update, reboot, reset, search for neighboring networks, backup, ping, network diagnostics and many others.

|

||||

</li>

|

||||

</ul>
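In USP, remote commands like the ones above are invoked with an Operate request that names a command path ending in `()`, for example `Device.Reboot()` or `Device.IP.Diagnostics.IPPing()`. The following is a minimal sketch of assembling such a request as a plain dictionary. The field names mirror those of the USP Operate message (`command`, `command_key`, `send_resp`, `input_args`), but the helper `build_operate` and the dictionary form are assumptions for illustration, not this project's API.

```python
# Hypothetical helper: builds the fields of a USP Operate request.
# The plain-dict representation is illustrative, not a wire format.


def build_operate(command, command_key="", send_resp=True, input_args=None):
    """Return the fields of an Operate request for one command path."""
    if not command.endswith("()"):
        raise ValueError("a USP command path must end with '()'")
    return {
        "command": command,
        "command_key": command_key,
        "send_resp": send_resp,
        # Input arguments are carried as strings, keyed by argument name.
        "input_args": {k: str(v) for k, v in (input_args or {}).items()},
    }


if __name__ == "__main__":
    # A reboot takes no arguments.
    print(build_operate("Device.Reboot()", command_key="reboot-1"))
    # A ping diagnostic with two input arguments (example values).
    print(build_operate(
        "Device.IP.Diagnostics.IPPing()",
        command_key="ping-1",
        input_args={"Host": "example.com", "NumberOfRepetitions": 3},
    ))
```

The `command_key` lets the controller match an asynchronous completion notification back to the request that triggered it.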

|

||||

|

||||

<ul>

|

||||

<li>

|

||||

<h4>IoT:</h4>

|

||||

<div align="center">

|

||||

<img src="https://github.com/leandrofars/oktopus/assets/83298718/a2a12d9d-05a0-428b-ba3f-1ad83c876301" width="90%"/>

|

||||

<br/>

|

||||

<img src="https://github.com/leandrofars/oktopus/assets/83298718/91a87f43-3de7-42bd-a689-a4e14eecf5c0" width="60%"/>

|

||||

<br/>

|

||||

<img src="https://github.com/leandrofars/oktopus/assets/83298718/73e2e360-d53e-494e-9a50-60c83dae75df" width="60%"/>

|

||||

</div>

|

||||

</li>

|

||||

</ul>

|

||||

|

||||

<ul>

|

||||

<li>

|

||||

<h4>Software Modules:</h4>

|

||||

Currently, telecommunications giants and startups publish new software daily, while slow delivery cycles and manual, time-consuming quality assurance processes make it difficult for integrators and service providers to compete. USP "Software Module Management" enables a containerized approach to software development for embedded devices, which can drastically reduce the chance of errors in software updates. It also makes it easier to integrate third-party software on a device while keeping the vendor's firmware isolated.

|

||||

<br/>

|

||||

<img src="https://github.com/leandrofars/oktopus/assets/83298718/64664b0e-81cd-4a29-bbc5-b4186a04dfa2" width="50%"/>

|

||||

</li>

|

||||

</ul>

|

||||

|

||||

|

||||

|

||||

--------------------------------------------------------------------------------------------------------------------------------------------------------

|

||||

|

||||

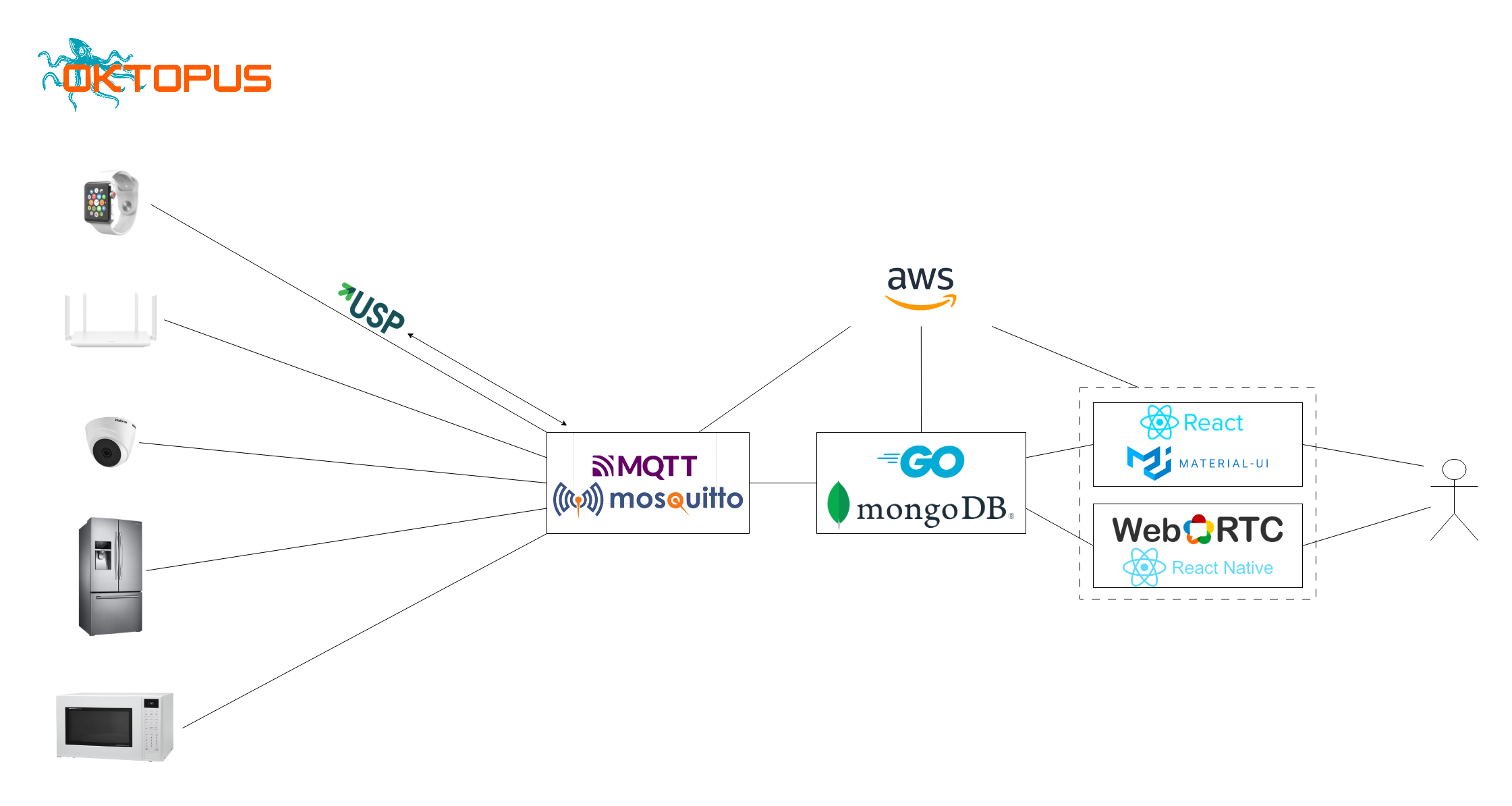

<ul><li><h4>Infrastructure:</h4></li></ul>

|

||||

|

||||

|

||||

|

||||

|

||||

<ul>

|

||||

<li>

|

||||

<h4>API:</h4>

|

||||

<ul>

|

||||

<li>

|

||||

<a href="https://documenter.getpostman.com/view/18932104/2s93eR3vQY#10c46751-ede9-4ea1-8ea4-264ebf539e5e">Documentation </a>

|

||||

</li>

|

||||

<li>

|

||||

<a href="https://www.postman.com/docking-module-astronomer-46169629/workspace/oktopus">Testing and development workspace</a>

|

||||

</li>

|

||||

</ul>

|

||||

</li>

|

||||

</ul>

|

||||

<ul>

|

||||

<li>

|

||||

<h4>Development:</h4>

|

||||

Run app using Docker:

|

||||

<pre>

|

||||

leandro@leandro-laptop:~$ cd oktopus/devops

|

||||

leandro@leandro-laptop:~/oktopus/devops$ docker compose up

|

||||

</pre>

|

||||

</li>

|

||||

</ul>

|

||||

|

||||

--------------------------------------------------------------------------------------------------------------------------------------------------------

|

||||

|

||||

<p>Are you going to use our project in your company, or would you like to talk about TR-369 and IoT management? We're online on <a href="https://join.slack.com/t/oktopustr-369/shared_invite/zt-1znmrbr52-3AXgOlSeQTPQW8_Qhn3C4g">Slack</a>.</p>

|

||||

<p>If you are interested in internal information about the team and our intentions, visit our <a href="https://github.com/leandrofars/oktopus/wiki">Wiki</a>.</p>

|

||||

<br/>

|

||||

<p>Bibliographic sources: <a href="https://www.broadband-forum.org/download/MU-461.pdf">MU-461.pdf</a>, <a href="https://usp.technology/specification/index.htm">TR-369.html</a>, <a href="https://drive.google.com/drive/folders/1N7FqK0PkDhjCN5s3OhQ_wmz9UcTSwRCX">USP Training Session Slides</a></p>

|

||||

35

README.es.md


|

|

@ -1,35 +0,0 @@

|

|||

<p>Read in other languages: <a href="README.en.md">English</a>, <a href="README.md">Português</a></p><br/><br/>

|

||||

<p align="center">

|

||||

<img src="https://user-images.githubusercontent.com/83298718/220207485-8c2aac78-95eb-4b43-b23e-c4bfa6cd30e6.png"/>

|

||||

</p>

|

||||

<br/>

|

||||

<p>

|

||||

This repository aims to promote the development of a multi-vendor management platform for IoT. Any device that follows the TR-369 protocol can be managed. The form of communication with IoT devices chosen for the project is MQTT. The project's main goal is to simplify and unify device management, which brings countless benefits to end users and service providers, eliminates long-standing problems in telecommunications, and meets the demands of today's technologies: device interconnection, data collection, speed, availability and much more.

|

||||

</p>

|

||||

<p>

|

||||

With the creation of new technologies, a more robust solution covering a wider range of niches became necessary. TR-369 emerged to fill the gaps left by its predecessor (TR-069), which served its purpose well in its time but is now becoming outdated. With that in mind, we aim to provide an alternative for IT integrators, providers, system administrators and anyone else who may be interested.

|

||||

</p>

|

||||

<p>

|

||||

This solution is inspired by the <a href="https://github.com/genieacs/genieacs">GenieACS</a> project and other commercial solutions on the market. We aim to strictly follow the USP TR-369 specifications, choosing MQTT for the MTP (Message Transfer Protocol) layer.

|

||||

</p>

|

||||

<ul><li><h4>Infrastructure:</h4></li></ul>

|

||||

|

||||

|

||||

|

||||

<ul>

|

||||

<li>

|

||||

<h4>API:</h4>

|

||||

<ul>

|

||||

<li>

|

||||

<a href="https://documenter.getpostman.com/view/18932104/2s93eR3vQY#10c46751-ede9-4ea1-8ea4-264ebf539e5e">Documentation</a>

|

||||

</li>

|

||||

<li>

|

||||

<a href="https://www.postman.com/docking-module-astronomer-46169629/workspace/oktopus">Testing and development workspace</a>

|

||||

</li>

|

||||

</ul>

|

||||

</li>

|

||||

</ul>

|

||||

|

||||

<br/>

|

||||

If you are interested in internal information about the team and our intentions, visit our <a href="https://github.com/leandrofars/oktopus/wiki">Wiki</a>.

|

||||

|

||||

74

README.md


|

|

@ -4,16 +4,16 @@

|

|||

<br/>

|

||||

<ul>

|

||||

<li>

|

||||

<a href="https://github.com/leandrofars/oktopus/blob/main/README.en.md">Readme in English</a>

|

||||

<a href="https://github.com/leandrofars/oktopus/blob/main/README.pt-br.md">Readme em Português</a>

|

||||

</li>

|

||||

</ul>

|

||||

<ul>

|

||||

<li>

|

||||

<h4>Introdução:</h4>

|

||||

<h4>Introduction:</h4>

|

||||

</li>

|

||||

</ul>

|

||||

<p>

|

||||

Este repositório tem como intuito fomentar o desenvolvimento de uma plataforma de gerência multi-vendor para IoTs. Todo dispositivo que seguir o protocolo TR-369 poderá ser gerenciado. O objetivo principal é facilitar e unificar a gerência de dispositivos, o que gera inúmeros benefícios para o usuário final e prestadores de serviços, suprimindo as demandas que as tecnologias de hoje exigem: interconexão de dispositivos, coleta de dados, rápidez, disponibilidade e muito mais.

|

||||

This repository aims to promote the development of a multi-vendor management platform for IoT devices. Any device that follows the TR-369 protocol can be managed. The main objective is to simplify and unify device management, which brings countless benefits to end users and service providers and meets the demands of today's technologies: device interconnection, data collection, speed, availability and much more.

|

||||

</p>

|

||||

<ul>

|

||||

<li>

|

||||

|

|

@ -21,27 +21,27 @@ Este repositório tem como intuito fomentar o desenvolvimento de uma plataforma

|

|||

</li>

|

||||

</ul>

|

||||

<p>

|

||||

O advento da Internet das Coisas traz inúmeras oportunidades e desafios pra os prestadores de serviços, com mais de um bilhão de dispositivos espalhados pelo globo hoje, fazendo uso do <a href="https://www.broadband-forum.org/download/TR-069_Amendment-2.pdf">TR-069</a>, qual é o futuro do protocolo e o que podemos esperar pela frente?

|

||||

The advent of the Internet of Things brings countless opportunities and challenges for service providers. With over a billion devices across the globe using <a href="https://www.broadband-forum.org/download/TR-069_Amendment-2.pdf">TR-069</a> today, what is the future of the protocol, and what can we expect ahead?

|

||||

</p>

|

||||

<p>

|

||||

O CWMP(CPE Wan Management Protocol), mais conhecido como TR-069, abriu muitas portas para o ecossistema de provedores, por meio dele é possível entregar serviços com agilidade, que servem ou ultrapassam as expectativas do cliente, fazendo uma gestão pró-ativa e segura da rede, tendo em vista também o menor custo e a maior eficiência para os prestadores de serviços.

|

||||

CWMP (CPE WAN Management Protocol), better known as TR-069, opened many doors for the provider ecosystem. It makes it possible to deliver services quickly that meet or exceed customer expectations, with proactive and secure network management, while also lowering costs and improving efficiency for service providers.

|

||||

</p>

|

||||

<p>

|

||||

Com a ascensão do que hoje chamamos de casa inteligente, a Internet das Coisas e a demanda por ambientes cada vez mais interconectadas e baseados em nuvem, novas demandas e obstáculos surgiram, abrindo a porta para a criação de uma nova forma de comunicação que supra as necessidades do mercado atual.

|

||||

With the rise of what we now call the smart home, the Internet of Things and the demand for increasingly interconnected, cloud-based environments, new demands and obstacles have emerged, opening the door to a new form of communication that meets the needs of the current market.

|

||||

</p>

|

||||

<p>

|

||||

Existe uma corrida acirrada para monetizar os dispostivos IoT que hoje fazem parte da casa conectada e de outros ambientes. Como resultado disso, muitas empresas estão criando suas próprias soluções proprietárias; isso é compreensível dada tamanha pressão gerada pela promessa da monetização da Casa Inteligente. Infelizmente, essas aplicações contribuem para um ecossistema pobre, onde um provedor acaba dependente e limitado a uma solução vertical, de um único Vendor. Isso gera um <b>ambiente de pouca competição (o que leva a maiores riscos), menos inovação, e o potencial de soluções com custos muito elevados</b>.

|

||||

There is a fierce race to monetize the IoT devices that are now part of the connected home and other environments. As a result, many companies are creating their own proprietary solutions; this is understandable given the pressure generated by the promise of Smart Home monetization. Unfortunately, these applications contribute to a poor ecosystem, where a provider ends up dependent on and limited to a vertical solution from a single vendor. This creates a <b>low-competition environment (which leads to greater risks), less innovation, and the potential for very high-cost solutions</b>.

|

||||

</p>

|

||||

<p>

|

||||

As tecnologias por trás do Wi-Fi, a conectividade entre dispositivos, a Casa Inteligente e os IoTs estão em constante evolução e aprimoramento. É importante que quando os prestadores de serviços forem buscar uma solução, busquem por algo que seja a "prova de futuro", pensando sempre adiante.

|

||||

The technologies behind Wi-Fi, device-to-device connectivity, the Smart Home and IoTs are constantly evolving and improving. It is important that when service providers look for a solution, they look for something that is "future proof", always thinking ahead.

|

||||

</p>

|

||||

<p>

|

||||

Buscando resolver os desafios citados anteriormente, provedores e fabricantes juntos, desenvolveram o USP (User Services Platform), definido pela norma TR-369 da Broadband Forum, sendo este, a evolução natural do TR-069. <b>Este novo padrão foi desenhado para ser flexível, seguro, escalonável e padronizado, para atender as demandas de um mundo conectado hoje, e no futuro.</b>

|

||||

To address the challenges mentioned above, providers and manufacturers together developed USP (User Services Platform), defined by the Broadband Forum's TR-369 standard, which is the natural evolution of TR-069. <b>This new standard is designed to be flexible, secure, scalable and standardized to meet the demands of a connected world, today and in the future.</b>

|

||||

</p>

|

||||

|

||||

<ul>

|

||||

<li>

|

||||

<h4>Empresas/Instituições envolvidas na criação do TR-369:</h4>

|

||||

<h4>Companies/Institutions involved in the creation of TR-369:</h4>

|

||||

<ul>

|

||||

<li>

|

||||

Google

|

||||

|

|

@ -84,7 +84,7 @@ Buscando resolver os desafios citados anteriormente, provedores e fabricantes ju

|

|||

|

||||

<ul>

|

||||

<li>

|

||||

<h4>Topologia:</h4>

|

||||

<h4>Topology:</h4>

|

||||

</li>

|

||||

</ul>

|

||||

|

||||

|

|

@ -96,7 +96,7 @@ Buscando resolver os desafios citados anteriormente, provedores e fabricantes ju

|

|||

|

||||

<ul>

|

||||

<li>

|

||||

<h4>Protocolos:</h4>

|

||||

<h4>Protocols:</h4>

|

||||

|

||||

|

||||

</li>

|

||||

|

|

@ -104,8 +104,8 @@ Buscando resolver os desafios citados anteriormente, provedores e fabricantes ju

|

|||

|

||||

<ul>

|

||||

<li>

|

||||

<h4>Notificações/Coleta de dados:</h4>

|

||||

É possível criar notificações que são disparadas em uma mudaça de valor, criação e remoção de objeto, operação completa, ou um evento.

|

||||

<h4>Notifications/Data collection:</h4>

|

||||

You can create notifications that fire on a value change, object creation or removal, operation completion, or an event.

|

||||

|

||||

|

||||

</li>

|

||||

|

|

@ -116,16 +116,16 @@ Buscando resolver os desafios citados anteriormente, provedores e fabricantes ju

|

|||

<h4><a href="https://github.com/BroadbandForum/obuspa">OB-USP-A</a> (Open Broadband User Services Platform Agent):</h4>

|

||||

<ul>

|

||||

<li>

|

||||

Desenhado para software embarcado (~400kb em ARM)

|

||||

Designed for embedded software (~400kb on ARM)

|

||||

</li>

|

||||

<li>

|

||||

Codificado em C

|

||||

Written in C

|

||||

</li>

|

||||

<li>

|

||||

Licença <a href="https://opensource.org/license/bsd-3-clause/">3-Clause BSD</a>

|

||||

License <a href="https://opensource.org/license/bsd-3-clause/">BSD 3-Clause</a>

|

||||

</li>

|

||||

<li>

|

||||

Feito para ambientes linux

|

||||

Made for Linux environments

|

||||

</li>

|

||||

</ul>

|

||||

</li>

|

||||

|

|

@ -133,15 +133,15 @@ Buscando resolver os desafios citados anteriormente, provedores e fabricantes ju

|

|||

|

||||

<ul>

|

||||

<li>

|

||||

<h4>Análise de Dados</h4>

|

||||

O protocolo possui um mecanismo chamado "Bulk Data", onde é possível recolher grandes volumes de dados do dispositivo, os dados podem ser recolhidos por HTTP, ou outro MTP de telemetria definido na norma do TR, esses dados podem estar em formato JSON, CSV ou XML. Isso gera a oportunidade de utilizar IA em cima desses dados, obtendo informações relevantes que podem ser usadas tendo diversas intenções, desde a predição de eventos, KPIs, informações para a área comercial, mas também para a melhor configuração de um dispositivo.

|

||||

<h4>Data Analysis</h4>

|

||||

The protocol has a mechanism called "Bulk Data" that makes it possible to collect large volumes of data from the device. The data can be collected over HTTP or another telemetry MTP defined in the TR standard, and can be in JSON, CSV or XML format. This creates the opportunity to apply AI to this data and obtain relevant information for different purposes, such as predicting events, tracking KPIs, informing the commercial area, and finding the best configuration for a device.

|

||||

</li>

|

||||

</ul>

|

||||

|

||||

<ul>

|

||||

<li>

|

||||

<h4>Wi-Fi:</h4>

|

||||

Possui mais de 130 métricas de configuração e diagnóstico de Wi-Fi, muitas dessa configurações e parâmetros são uma troca entre área de cobertura do sinal, latência e throughput. Ao implantar sistemas Wi-Fi, tende-se a manter a mesma configuração em todos os clientes, fazendo com que a tecnologia tenha uma performance abaixo do esperado. A Machine Learning aliada à análise de dados citada no tópico anterior, torna possível automatizar o gerenciamento e a optimização de redes Wireless, onde uma abordagem de big data é capax de encontrar a configuração ideal para cada dispositivo.

|

||||

<h4>Wi-Fi:</h4>

|

||||

The protocol has over 130 Wi-Fi configuration and diagnostics metrics. Many of these settings and parameters are a trade-off between signal coverage area, latency and throughput. When deploying Wi-Fi systems, there is a tendency to keep the same configuration on all clients, causing the technology to perform below expectations. Machine learning combined with the data analysis mentioned in the previous topic makes it possible to automate the management and optimization of wireless networks, where a big data approach can find the ideal configuration for each device.

|

||||

|

||||

|

||||

</li>

|

||||

|

|

@ -149,8 +149,8 @@ Possui mais de 130 métricas de configuração e diagnóstico de Wi-Fi, muitas d

|

|||

|

||||

<ul>

|

||||

<li>

|

||||

<h4>Comandos:</h4>

|

||||

É possível realizar comandos remotamente no produto, como por exemplo: atualização de firmware, reboot, reset, busca de redes vizinhas, backup, ping, diagnósticos de rede e muitos outros.

|

||||

<h4>Commands:</h4>

|

||||

It is possible to run commands remotely on the device, such as firmware update, reboot, reset, search for neighboring networks, backup, ping, network diagnostics and many others.

|

||||

</li>

|

||||

</ul>

|

||||

|

||||

|

|

@ -169,8 +169,8 @@ Possui mais de 130 métricas de configuração e diagnóstico de Wi-Fi, muitas d

|

|||

|

||||

<ul>

|

||||

<li>

|

||||

<h4>Módulos de Software:</h4>

|

||||

Atualmente, gigantes das telecomunicações e startups, publicam software novo diariamente, ciclos de entrega lentos e processos de garantia de qualidade manuais e demorados, torna difícil a competição para integradores e prestadores de serviços. USP "Software Module Management" permite uma abordagem containerizada ao desenvolvimento de software de dispositivos embarcados, tornando possível diminuir drasticamente a chance de erro em atualições de software, também facilita a integração de terceiros em um dispositvo, manténdo ainda assim, isolada a parte de firmware do Vendor.

|

||||

<h4>Software Modules:</h4>

|

||||

Currently, telecommunications giants and startups publish new software daily, while slow delivery cycles and manual, time-consuming quality assurance processes make it difficult for integrators and service providers to compete. USP "Software Module Management" enables a containerized approach to software development for embedded devices, which can drastically reduce the chance of errors in software updates. It also makes it easier to integrate third-party software on a device while keeping the vendor's firmware isolated.

|

||||

<br/>

|

||||

<img src="https://github.com/leandrofars/oktopus/assets/83298718/64664b0e-81cd-4a29-bbc5-b4186a04dfa2" width="50%"/>

|

||||

</li>

|

||||

|

|

@ -180,9 +180,9 @@ Atualmente, gigantes das telecomunicações e startups, publicam software novo d

|

|||

|

||||

--------------------------------------------------------------------------------------------------------------------------------------------------------

|

||||

|

||||

<ul><li><h4>Infraestrutura:</h4></li></ul>

|

||||

<ul><li><h4>Infrastructure:</h4></li></ul>

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

<ul>

|

||||

|

|

@ -190,18 +190,18 @@ Atualmente, gigantes das telecomunicações e startups, publicam software novo d

|

|||

<h4>API:</h4>

|

||||

<ul>

|

||||

<li>

|

||||

<a href="https://documenter.getpostman.com/view/18932104/2s93eR3vQY#10c46751-ede9-4ea1-8ea4-264ebf539e5e">Documentação </a>

|

||||

<a href="https://documenter.getpostman.com/view/18932104/2s93eR3vQY#10c46751-ede9-4ea1-8ea4-264ebf539e5e">Documentation </a>

|

||||

</li>

|

||||

<li>

|

||||

<a href="https://www.postman.com/docking-module-astronomer-46169629/workspace/oktopus">Workspace de testes e desenvolvimento</a>

|

||||

<a href="https://www.postman.com/docking-module-astronomer-46169629/workspace/oktopus">Testing and development workspace</a>

|

||||

</li>

|

||||

</ul>

|

||||

</li>

|

||||

</ul>

|

||||

<ul>

|

||||

<li>

|

||||

<h4>Desenvolvimento:</h4>

|

||||

Subir aplicação utilizando Docker:

|

||||

<h4>Development:</h4>

|

||||

Run app using Docker:

|

||||

<pre>

|

||||

leandro@leandro-laptop:~$ cd oktopus/devops

|

||||

leandro@leandro-laptop:~/oktopus/devops$ docker compose up

|

||||

|

|

@ -210,8 +210,10 @@ leandro@leandro-laptop:~/oktopus/devops$ docker compose up

|

|||

</ul>

|

||||

|

||||

--------------------------------------------------------------------------------------------------------------------------------------------------------

|

||||

<p>Vai usar nosso projeto na sua empresa? gostaria de conversar sobre o TR-369 e gerenciamento de IoTs, estamos online no <a href="https://join.slack.com/t/oktopustr-369/shared_invite/zt-1znmrbr52-3AXgOlSeQTPQW8_Qhn3C4g">Slack</a>.</p>

|

||||

<p>Caso você tenha interesse em informações internas sobre o time e nossas pretensões acesse nossa <a href="https://github.com/leandrofars/oktopus/wiki">Wiki</a>.</p>

|

||||

<br/>

|

||||

<p>Fontes bibliográficas: <a href="https://www.broadband-forum.org/download/MU-461.pdf">MU-461.pdf</a>, <a href="https://usp.technology/specification/index.htm">TR-369.html</a>, <a href="https://drive.google.com/drive/folders/1N7FqK0PkDhjCN5s3OhQ_wmz9UcTSwRCX">USP Training Session Slides</a></p>

|

||||

|

||||

<p>Are you going to use our project in your company, or would you like to talk about TR-369 and IoT management? We're online on <a href="https://join.slack.com/t/oktopustr-369/shared_invite/zt-1znmrbr52-3AXgOlSeQTPQW8_Qhn3C4g">Slack</a>.</p>

|

||||

<p>If you are interested in internal information about the team and our intentions, visit our <a href="https://github.com/leandrofars/oktopus/wiki">Wiki</a>.</p>

|

||||

|

||||

--------------------------------------------------------------------------------------------------------------------------------------------------------

|

||||

|

||||

<p>Bibliographic sources: <a href="https://www.broadband-forum.org/download/MU-461.pdf">MU-461.pdf</a>, <a href="https://usp.technology/specification/index.htm">TR-369.html</a>, <a href="https://drive.google.com/drive/folders/1N7FqK0PkDhjCN5s3OhQ_wmz9UcTSwRCX">USP Training Session Slides</a></p>

|

||||

|

|

|

|||

218

README.pt-br.md

Normal file


|

|

@ -0,0 +1,218 @@

|

|||

<p align="center">

|

||||

<img src="https://user-images.githubusercontent.com/83298718/220207485-8c2aac78-95eb-4b43-b23e-c4bfa6cd30e6.png"/>

|

||||

</p>

|

||||

<br/>

|

||||

<ul>

|

||||

<li>

|

||||

<a href="https://github.com/leandrofars/oktopus/blob/main/README.en.md">Readme in English</a>

|

||||

</li>

|

||||

</ul>

|

||||

<ul>

|

||||

<li>

|

||||

<h4>Introduction:</h4>

|

||||

</li>

|

||||

</ul>

|

||||

<p>

|

||||

This repository aims to promote the development of a multi-vendor management platform for IoT devices. Any device that follows the TR-369 protocol can be managed. The main objective is to simplify and unify device management, which brings countless benefits to end users and service providers and meets the demands of today's technologies: device interconnection, data collection, speed, availability and much more.

|

||||

</p>

|

||||

<ul>

|

||||

<li>

|

||||

<h4>TR-069 ---> TR-369 :</h4>

|

||||

</li>

|

||||

</ul>

|

||||

<p>

|

||||

The advent of the Internet of Things brings countless opportunities and challenges for service providers. With over a billion devices across the globe using <a href="https://www.broadband-forum.org/download/TR-069_Amendment-2.pdf">TR-069</a> today, what is the future of the protocol, and what can we expect ahead?

|

||||

</p>

|

||||

<p>

|

||||

CWMP (CPE WAN Management Protocol), better known as TR-069, opened many doors for the provider ecosystem. It makes it possible to deliver services quickly that meet or exceed customer expectations, with proactive and secure network management, while also keeping costs lower and efficiency higher for service providers.

|

||||

</p>

|

||||

<p>

|

||||

With the rise of what we now call the smart home, the Internet of Things, and the demand for increasingly interconnected, cloud-based environments, new demands and obstacles have emerged, opening the door to a new form of communication that meets the needs of today's market.

|

||||

</p>

|

||||

<p>

|

||||

There is a fierce race to monetize the IoT devices that are now part of the connected home and other environments. As a result, many companies are building their own proprietary solutions, which is understandable given the pressure created by the promise of Smart Home monetization. Unfortunately, these applications contribute to a poor ecosystem in which a provider ends up dependent on, and limited to, a vertical solution from a single vendor. This leads to an <b>environment with little competition (which leads to greater risk), less innovation, and potentially very costly solutions</b>.

|

||||

</p>

|

||||

<p>

|

||||

The technologies behind Wi-Fi, device connectivity, the Smart Home, and IoT are constantly evolving and improving. When service providers look for a solution, it is important that they look for something future-proof, always thinking ahead.

|

||||

</p>

|

||||

<p>

|

||||

To address the challenges described above, providers and manufacturers together developed USP (User Services Platform), defined by the Broadband Forum's TR-369 standard, as the natural evolution of TR-069. <b>This new standard was designed to be flexible, secure, scalable, and standardized, to meet the demands of a connected world today and in the future.</b>

|

||||

</p>

|

||||

|

||||

<ul>

|

||||

<li>

|

||||

<h4>Companies/Institutions involved in the creation of TR-369:</h4>

|

||||

<ul>

|

||||

<li>

|

||||

Google

|

||||

</li>

|

||||

<li>

|

||||

Nokia

|

||||

</li>

|

||||

<li>

|

||||

Huawei

|

||||

</li>

|

||||

<li>

|

||||

Axiros

|

||||

</li>

|

||||

<li>

|

||||

Orange

|

||||

</li>

|

||||

<li>

|

||||

Commscope

|

||||

</li>

|

||||

<li>

|

||||

Assia

|

||||

</li>

|

||||

<li>

|

||||

AT&T

|

||||

</li>

|

||||

<li>

|

||||

NEC

|

||||

</li>

|

||||

<li>

|

||||

Arris

|

||||

</li>

|

||||

<li>

|

||||

QA Cafe

|

||||

</li>

|

||||

</ul>

|

||||

</li>

|

||||

</ul>

|

||||

|

||||

--------------------------------------------------------------------------------------------------------------------------------------------------------

|

||||

|

||||

<ul>

|

||||

<li>

|

||||

<h4>Topology:</h4>

|

||||

</li>

|

||||

</ul>

|

||||

|

||||

<img src="https://usp.technology/specification/architecture/usp_architecture.png"/>

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

<ul>

|

||||

<li>

|

||||

<h4>Protocols:</h4>

|

||||

|

||||

|

||||

</li>

|

||||

</ul>

|

||||

|

||||

<ul>

|

||||

<li>

|

||||

<h4>Notifications/Data collection:</h4>

|

||||

Notifications can be configured to fire on a value change, object creation or removal, operation completion, or an event.

|

||||

|

||||

|

||||

</li>

|

||||

</ul>

|

||||

|

||||

<ul>

|

||||

<li>

|

||||

<h4><a href="https://github.com/BroadbandForum/obuspa">OB-USP-A</a> (Open Broadband User Services Platform Agent):</h4>

|

||||

<ul>

|

||||

<li>

|

||||

Designed for embedded software (~400kb on ARM)

|

||||

</li>

|

||||

<li>

|

||||

Written in C

|

||||

</li>

|

||||

<li>

|

||||

<a href="https://opensource.org/license/bsd-3-clause/">3-Clause BSD</a> license

|

||||

</li>

|

||||

<li>

|

||||

Built for Linux environments

|

||||

</li>

|

||||

</ul>

|

||||

</li>

|

||||

</ul>

|

||||

|

||||

<ul>

|

||||

<li>

|

||||

<h4>Data Analysis</h4>

|

||||

The protocol has a mechanism called "Bulk Data" that makes it possible to collect large volumes of data from the device. The data can be collected over HTTP or another telemetry MTP defined in the TR standard, and can be in JSON, CSV, or XML format. This creates an opportunity to apply AI to the data and extract relevant information for a range of purposes, from event prediction, KPIs, and commercial insights to finding the best configuration for a device.

|

||||

</li>

|

||||

</ul>

|

||||

|

||||

<ul>

|

||||

<li>

|

||||

<h4>Wi-Fi:</h4>

|

||||

There are more than 130 Wi-Fi configuration and diagnostic metrics, and many of these settings and parameters are a trade-off between signal coverage, latency, and throughput. When deploying Wi-Fi systems, operators tend to keep the same configuration for every customer, which makes the technology perform below expectations. Machine learning, combined with the data analysis described in the previous topic, makes it possible to automate the management and optimization of wireless networks, where a big data approach can find the ideal configuration for each device.

|

||||

|

||||

|

||||

</li>

|

||||

</ul>

|

||||

|

||||

<ul>

|

||||

<li>

|

||||

<h4>Commands:</h4>

|

||||

Commands can be run remotely on the device, for example: firmware update, reboot, reset, neighboring network scan, backup, ping, network diagnostics, and many others.

|

||||

</li>

|

||||

</ul>

|

||||

|

||||

<ul>

|

||||

<li>

|

||||

<h4>IoT:</h4>

|

||||

<div align="center">

|

||||

<img src="https://github.com/leandrofars/oktopus/assets/83298718/a2a12d9d-05a0-428b-ba3f-1ad83c876301" width="90%"/>

|

||||

<br/>

|

||||

<img src="https://github.com/leandrofars/oktopus/assets/83298718/91a87f43-3de7-42bd-a689-a4e14eecf5c0" width="60%"/>

|

||||

<br/>

|

||||

<img src="https://github.com/leandrofars/oktopus/assets/83298718/73e2e360-d53e-494e-9a50-60c83dae75df" width="60%"/>

|

||||

</div>

|

||||

</li>

|

||||

</ul>

|

||||

|

||||

<ul>

|

||||

<li>

|

||||

<h4>Software Modules:</h4>

|

||||

Today, telecom giants and startups publish new software daily, and slow delivery cycles and manual, time-consuming quality assurance processes make it hard for integrators and service providers to compete. USP "Software Module Management" enables a containerized approach to developing software for embedded devices, which can drastically reduce the chance of errors in software updates. It also makes it easier to integrate third-party software on a device while keeping the vendor's firmware isolated.

|

||||

<br/>

|

||||

<img src="https://github.com/leandrofars/oktopus/assets/83298718/64664b0e-81cd-4a29-bbc5-b4186a04dfa2" width="50%"/>

|

||||

</li>

|

||||

</ul>

|

||||

|

||||

|

||||

|

||||

--------------------------------------------------------------------------------------------------------------------------------------------------------

|

||||

|

||||

<ul><li><h4>Infrastructure:</h4></li></ul>

|

||||

|

||||

|

||||

|

||||

<ul>

|

||||

<li>

|

||||

<h4>API:</h4>

|

||||

<ul>

|

||||

<li>

|

||||

<a href="https://documenter.getpostman.com/view/18932104/2s93eR3vQY#10c46751-ede9-4ea1-8ea4-264ebf539e5e">Documentation</a>

|

||||

</li>

|

||||

<li>

|

||||

<a href="https://www.postman.com/docking-module-astronomer-46169629/workspace/oktopus">Testing and development workspace</a>

|

||||

</li>

|

||||

</ul>

|

||||

</li>

|

||||

</ul>

|

||||

<ul>

|

||||

<li>

|

||||

<h4>Development:</h4>

|

||||

Start the application using Docker:

|

||||

<pre>

|

||||

leandro@leandro-laptop:~$ cd oktopus/devops

|

||||

leandro@leandro-laptop:~/oktopus/devops$ docker compose up

|

||||

</pre>

|

||||

</li>

|

||||

</ul>

|

||||

|

||||

--------------------------------------------------------------------------------------------------------------------------------------------------------

|

||||

<p>Planning to use our project at your company? If you would like to talk about TR-369 and IoT management, we are online on <a href="https://join.slack.com/t/oktopustr-369/shared_invite/zt-1znmrbr52-3AXgOlSeQTPQW8_Qhn3C4g">Slack</a>.</p>

|

||||

<p>If you are interested in internal information about the team and our plans, visit our <a href="https://github.com/leandrofars/oktopus/wiki">Wiki</a>.</p>

|

||||

|

||||

--------------------------------------------------------------------------------------------------------------------------------------------------------

|

||||

|

||||

<p>Bibliographic sources: <a href="https://www.broadband-forum.org/download/MU-461.pdf">MU-461.pdf</a>, <a href="https://usp.technology/specification/index.htm">TR-369.html</a>, <a href="https://drive.google.com/drive/folders/1N7FqK0PkDhjCN5s3OhQ_wmz9UcTSwRCX">USP Training Session Slides</a></p>

|

||||

|

||||

|

|

@ -45,8 +45,7 @@ func main() {

|

|||

|

||||

log.Println("Starting Oktopus Project TR-369 Controller Version:", VERSION)

|

||||

// fl_endpointId := flag.String("endpoint_id", "proto::oktopus-controller", "Defines the enpoint id the Agent must trust on.")

|

||||

flDevicesTopic := flag.String("d", "oktopus/devices", "That's the topic mqtt broker end new devices info.")

|

||||

flDisconTopic := flag.String("dis", "oktopus/disconnect", "It's where disconnected IoTs are known.")

|

||||

flDevicesTopic := flag.String("d", "oktopus/+/status/+", "That's the topic mqtt broker end new devices info.")

|

||||

flSubTopic := flag.String("sub", "oktopus/+/controller/+", "That's the topic agent must publish to, and the controller keeps on listening.")

|

||||

flBrokerAddr := flag.String("a", "localhost", "Mqtt broker adrress")

|

||||

flBrokerPort := flag.String("p", "1883", "Mqtt broker port")
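The new `-d` default (`oktopus/+/status/+`) and the `-sub` default (`oktopus/+/controller/+`) rely on MQTT's single-level wildcard `+`. As a rough illustration of which topic names such filters accept, here is a minimal matcher written from the general MQTT wildcard rules. The function name `matchTopic` and the sample topic names are assumptions for illustration and are not part of this commit; the sketch also ignores the special handling of topics that begin with `$`.

```go
package main

import (
	"fmt"
	"strings"
)

// matchTopic reports whether an MQTT topic name matches a topic filter.
// "+" matches exactly one level; "#" matches all remaining levels
// (including none) and is only valid as the last level of the filter.
// Hypothetical helper: the broker does this matching itself.
func matchTopic(filter, topic string) bool {
	f := strings.Split(filter, "/")
	t := strings.Split(topic, "/")
	for i, level := range f {
		if level == "#" {
			return i == len(f)-1
		}
		if i >= len(t) {
			return false
		}
		if level != "+" && level != t[i] {
			return false
		}
	}
	return len(f) == len(t)
}

func main() {
	// Sample topic names are illustrative only.
	fmt.Println(matchTopic("oktopus/+/status/+", "oktopus/v1/status/dev1"))
	fmt.Println(matchTopic("oktopus/+/status/+", "oktopus/v1/controller/dev1"))
}
```

With the wildcard in the second and fourth levels, a single subscription can cover every protocol version and device identifier, assuming devices publish under that four-level layout.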

|

||||

|

|

@ -54,7 +53,7 @@ func main() {

|

|||

flBrokerUsername := flag.String("u", "", "Mqtt broker username")

|

||||

flBrokerPassword := flag.String("P", "", "Mqtt broker password")

|

||||

flBrokerClientId := flag.String("i", "", "A clientid for the Mqtt connection")

|

||||

flBrokerQos := flag.Int("q", 2, "Quality of service of mqtt messages delivery")

|

||||

flBrokerQos := flag.Int("q", 0, "Quality of service of mqtt messages delivery")

|

||||

flAddrDB := flag.String("mongo", "mongodb://localhost:27017/", "MongoDB URI")

|

||||

flApiPort := flag.String("ap", "8000", "Rest api port")

|

||||

flHelp := flag.Bool("help", false, "Help")

|

||||

|

|

@ -86,7 +85,6 @@ func main() {

|

|||

QoS: *flBrokerQos,

|

||||

SubTopic: *flSubTopic,

|

||||

DevicesTopic: *flDevicesTopic,

|

||||

DisconnectTopic: *flDisconTopic,

|

||||

TLS: *flTlsCert,

|

||||

DB: database,

|

||||

MsgQueue: apiMsgQueue,

|

||||

|

|

|

|||

|

|

@ -2,6 +2,13 @@ package api

|

|||

|

||||

import (

|

||||

"encoding/json"

|

||||

"log"

|

||||

"net/http"

|

||||

"strconv"

|

||||

"strings"

|

||||

"sync"

|

||||

"time"

|

||||

|

||||

"github.com/gorilla/mux"

|

||||

"github.com/leandrofars/oktopus/internal/api/auth"

|

||||

"github.com/leandrofars/oktopus/internal/api/cors"

|

||||

|

|

@ -12,10 +19,6 @@ import (

|

|||

"github.com/leandrofars/oktopus/internal/utils"

|

||||

"go.mongodb.org/mongo-driver/mongo"

|

||||

"google.golang.org/protobuf/proto"

|

||||

"log"

|

||||

"net/http"

|

||||

"sync"

|

||||

"time"

|

||||

)

|

||||

|

||||

type Api struct {

|

||||

|

|

@ -26,6 +29,19 @@ type Api struct {

|

|||

QMutex *sync.Mutex

|

||||

}

|

||||

|

||||

type WiFi struct {

|

||||

SSID string `json:"ssid"`

|

||||

Password string `json:"password"`

|

||||

Security string `json:"security"`

|

||||

SecurityCapabilities []string `json:"securityCapabilities"`

|

||||

AutoChannelEnable bool `json:"autoChannelEnable"`

|

||||

Channel int `json:"channel"`

|

||||

ChannelBandwidth string `json:"channelBandwidth"`

|

||||

FrequencyBand string `json:"frequencyBand"`

|

||||

//PossibleChannels []int `json:"PossibleChannels"`

|

||||

SupportedChannelBandwidths []string `json:"supportedChannelBandwidths"`

|

||||

}

|

||||

|

||||

const (

|

||||

NormalUser = iota

|

||||

AdminUser

|

||||

|

|

@ -50,9 +66,8 @@ func StartApi(a Api) {

|

|||

authentication := r.PathPrefix("/api/auth").Subrouter()

|

||||

authentication.HandleFunc("/login", a.generateToken).Methods("PUT")

|

||||

authentication.HandleFunc("/register", a.registerUser).Methods("POST")

|

||||

// Keep the line above commented to avoid people get unintended admin privileges.

|

||||

// Uncomment it only once for you to get admin privileges and create new users.

|

||||

//authentication.HandleFunc("/admin/register", a.registerAdminUser).Methods("POST")

|

||||

authentication.HandleFunc("/admin/register", a.registerAdminUser).Methods("POST")

|

||||

authentication.HandleFunc("/admin/exists", a.adminUserExists).Methods("GET")

|

||||

iot := r.PathPrefix("/api/device").Subrouter()

|

||||

iot.HandleFunc("", a.retrieveDevices).Methods("GET")

|

||||

iot.HandleFunc("/{sn}/get", a.deviceGetMsg).Methods("PUT")

|

||||

|

|

@ -62,6 +77,7 @@ func StartApi(a Api) {

|

|||

iot.HandleFunc("/{sn}/parameters", a.deviceGetSupportedParametersMsg).Methods("PUT")

|

||||

iot.HandleFunc("/{sn}/instances", a.deviceGetParameterInstances).Methods("PUT")

|

||||

iot.HandleFunc("/{sn}/update", a.deviceFwUpdate).Methods("PUT")

|

||||

iot.HandleFunc("/{sn}/wifi", a.deviceWifi).Methods("PUT", "GET")

|

||||

|

||||

// Middleware for requests which requires user to be authenticated

|

||||

iot.Use(func(handler http.Handler) http.Handler {

|

||||

|

|

@ -171,7 +187,7 @@ func (a *Api) deviceFwUpdate(w http.ResponseWriter, r *http.Request) {

|

|||

|

||||

a.MsgQueue[msg.Header.MsgId] = make(chan usp_msg.Msg)

|

||||

log.Println("Sending Msg:", msg.Header.MsgId)

|

||||

a.Broker.Publish(tr369Message, "oktopus/v1/agent/"+sn, "oktopus/v1/api/"+sn)

|

||||

a.Broker.Publish(tr369Message, "oktopus/v1/agent/"+sn, "oktopus/v1/api/"+sn, false)

|

||||

|

||||

var getMsgAnswer *usp_msg.GetResp

|

||||

|

||||

|

|

@ -231,7 +247,7 @@ func (a *Api) deviceFwUpdate(w http.ResponseWriter, r *http.Request) {

|

|||

|

||||

a.MsgQueue[msg.Header.MsgId] = make(chan usp_msg.Msg)

|

||||

log.Println("Sending Msg:", msg.Header.MsgId)

|

||||

a.Broker.Publish(tr369Message, "oktopus/v1/agent/"+sn, "oktopus/v1/api/"+sn)

|

||||

a.Broker.Publish(tr369Message, "oktopus/v1/agent/"+sn, "oktopus/v1/api/"+sn, false)

|

||||

|

||||

select {

|

||||

case msg := <-a.MsgQueue[msg.Header.MsgId]:

|

||||

|

|

@ -250,6 +266,134 @@ func (a *Api) deviceFwUpdate(w http.ResponseWriter, r *http.Request) {

|

|||

}

|

||||

}

|

||||

|

||||

func (a *Api) deviceWifi(w http.ResponseWriter, r *http.Request) {

|

||||

vars := mux.Vars(r)

|

||||

sn := vars["sn"]

|

||||

a.deviceExists(sn, w)

|

||||

|

||||

if r.Method == http.MethodGet {

|

||||

msg := utils.NewGetMsg(usp_msg.Get{

|

||||

ParamPaths: []string{

|

||||

"Device.WiFi.SSID.[Enable==true].SSID",

|

||||

//"Device.WiFi.AccessPoint.[Enable==true].SSIDReference",

|

||||

"Device.WiFi.AccessPoint.[Enable==true].Security.ModeEnabled",

|

||||

"Device.WiFi.AccessPoint.[Enable==true].Security.ModesSupported",

|

||||

//"Device.WiFi.EndPoint.[Enable==true].",

|

||||

"Device.WiFi.Radio.[Enable==true].AutoChannelEnable",

|

||||

"Device.WiFi.Radio.[Enable==true].Channel",

|

||||

"Device.WiFi.Radio.[Enable==true].CurrentOperatingChannelBandwidth",

|

||||

"Device.WiFi.Radio.[Enable==true].OperatingFrequencyBand",

|

||||

//"Device.WiFi.Radio.[Enable==true].PossibleChannels",

|

||||

"Device.WiFi.Radio.[Enable==true].SupportedOperatingChannelBandwidths",

|

||||

},

|

||||

MaxDepth: 2,

|

||||

})

|

||||

|

||||

encodedMsg, err := proto.Marshal(&msg)

|

||||

if err != nil {

|

||||

log.Println(err)

|

||||

w.WriteHeader(http.StatusBadRequest)

|

||||

return

|

||||

}

|

||||

|

||||

record := utils.NewUspRecord(encodedMsg, sn)

|

||||

tr369Message, err := proto.Marshal(&record)

|

||||

if err != nil {

|

||||

log.Fatalln("Failed to encode tr369 record:", err)

|

||||

}

|

||||

|

||||

//a.Broker.Request(tr369Message, usp_msg.Header_GET, "oktopus/v1/agent/"+sn, "oktopus/v1/get/"+sn)

|

||||

a.MsgQueue[msg.Header.MsgId] = make(chan usp_msg.Msg)

|

||||

log.Println("Sending Msg:", msg.Header.MsgId)

|

||||

a.Broker.Publish(tr369Message, "oktopus/v1/agent/"+sn, "oktopus/v1/api/"+sn, false)

|

||||

|

||||

//TODO: verify in protocol and in other models, the Device.Wifi parameters. Maybe in the future, to use SSIDReference from AccessPoint

|

||||

select {

|

||||

case msg := <-a.MsgQueue[msg.Header.MsgId]:

|

||||

log.Printf("Received Msg: %s", msg.Header.MsgId)

|

||||

delete(a.MsgQueue, msg.Header.MsgId)

|

||||

log.Println("requests queue:", a.MsgQueue)

|

||||

answer := msg.Body.GetResponse().GetGetResp()

|

||||

|

||||

var wifi [2]WiFi

|

||||

|

||||

//TODO: better algorithm, might use something faster an more reliable

|

||||

//TODO: full fill the commented wifi resources

|

||||

for _, x := range answer.ReqPathResults {

|

||||

if x.RequestedPath == "Device.WiFi.SSID.[Enable==true].SSID" {

|

||||

for i, y := range x.ResolvedPathResults {

|

||||

wifi[i].SSID = y.ResultParams["SSID"]

|

||||

}

|

||||

continue

|

||||

}

|

||||

if x.RequestedPath == "Device.WiFi.AccessPoint.[Enable==true].Security.ModeEnabled" {

|

||||

for i, y := range x.ResolvedPathResults {

|

||||

wifi[i].Security = y.ResultParams["Security.ModeEnabled"]

|

||||

}

|

||||

continue

|

||||

}

|

||||

if x.RequestedPath == "Device.WiFi.AccessPoint.[Enable==true].Security.ModesSupported" {

|

||||

for i, y := range x.ResolvedPathResults {

|

||||

wifi[i].SecurityCapabilities = strings.Split(y.ResultParams["Security.ModesSupported"], ",")

|

||||

}

|

||||

continue

|

||||

}

|

||||

if x.RequestedPath == "Device.WiFi.Radio.[Enable==true].AutoChannelEnable" {

|

||||

for i, y := range x.ResolvedPathResults {

|

||||

autoChannel, err := strconv.ParseBool(y.ResultParams["AutoChannelEnable"])

|

||||

if err != nil {

|

||||

log.Println(err)

|

||||

wifi[i].AutoChannelEnable = false

|

||||

} else {

|

||||

wifi[i].AutoChannelEnable = autoChannel

|

||||

}

|

||||

}

|

||||

continue

|

||||

}

|

||||

if x.RequestedPath == "Device.WiFi.Radio.[Enable==true].Channel" {

|

||||

for i, y := range x.ResolvedPathResults {

|

||||

channel, err := strconv.Atoi(y.ResultParams["Channel"])

|

||||

if err != nil {

|

||||

log.Println(err)

|

||||

wifi[i].Channel = -1

|

||||

} else {

|

||||

wifi[i].Channel = channel

|

||||

}

|

||||

}

|

||||

continue

|

||||

}

|

||||

if x.RequestedPath == "Device.WiFi.Radio.[Enable==true].CurrentOperatingChannelBandwidth" {

|

||||

for i, y := range x.ResolvedPathResults {

|

||||

wifi[i].ChannelBandwidth = y.ResultParams["CurrentOperatingChannelBandwidth"]

|

||||

}

|

||||

continue

|

||||

}

|

||||

if x.RequestedPath == "Device.WiFi.Radio.[Enable==true].OperatingFrequencyBand" {

|

||||

for i, y := range x.ResolvedPathResults {

|

||||

wifi[i].FrequencyBand = y.ResultParams["OperatingFrequencyBand"]

|

||||

}

|

||||

continue

|

||||

}

|

||||

if x.RequestedPath == "Device.WiFi.Radio.[Enable==true].SupportedOperatingChannelBandwidths" {

|

||||

for i, y := range x.ResolvedPathResults {

|

||||

wifi[i].SupportedChannelBandwidths = strings.Split(y.ResultParams["SupportedOperatingChannelBandwidths"], ",")

|

||||

}

|

||||

continue

|

||||

}

|

||||

}

|

||||

json.NewEncoder(w).Encode(&wifi)

|

||||

return

|

||||

case <-time.After(time.Second * 45):

|

||||

log.Printf("Request %s Timed Out", msg.Header.MsgId)

|

||||

w.WriteHeader(http.StatusGatewayTimeout)

|

||||

delete(a.MsgQueue, msg.Header.MsgId)

|

||||

log.Println("requests queue:", a.MsgQueue)

|

||||

json.NewEncoder(w).Encode("Request Timed Out")

|

||||

return

|

||||

}

|

||||

}

|

||||

}
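`deviceWifi` writes into a fixed `[2]WiFi` array using the index of each resolved path, so a device that reports more than two enabled instances would index out of range. Following the TODO about a better algorithm, here is a minimal sketch of a table-driven variant that grows a slice as needed. The `pathResult` type, the `setters` table, and `collect` are hypothetical simplifications of the USP `GetResp` structures, and only two of the parameters are shown. Like the original, it assumes that the n-th SSID and the n-th radio belong together.

```go
package main

import (
	"fmt"
	"strconv"
)

// WiFi is a trimmed-down version of the struct added in this commit.
type WiFi struct {
	SSID    string
	Channel int
}

// pathResult is a hypothetical stand-in for one requested path in a GetResp:
// the path that was asked for and the parameters of each resolved instance.
type pathResult struct {
	RequestedPath string
	Resolved      []map[string]string
}

// setters maps a requested path to the code that copies its value into WiFi.
var setters = map[string]func(w *WiFi, params map[string]string){
	"Device.WiFi.SSID.[Enable==true].SSID": func(w *WiFi, p map[string]string) {
		w.SSID = p["SSID"]
	},
	"Device.WiFi.Radio.[Enable==true].Channel": func(w *WiFi, p map[string]string) {
		ch, err := strconv.Atoi(p["Channel"])
		if err != nil {
			ch = -1 // same fallback value as the handler uses
		}
		w.Channel = ch
	},
}

// collect builds one WiFi entry per instance index, growing the slice on demand.
func collect(results []pathResult) []WiFi {
	var wifi []WiFi
	for _, r := range results {
		set, ok := setters[r.RequestedPath]
		if !ok {
			continue
		}
		for i, params := range r.Resolved {
			for len(wifi) <= i {
				wifi = append(wifi, WiFi{})
			}
			set(&wifi[i], params)
		}
	}
	return wifi
}

func main() {
	out := collect([]pathResult{
		{"Device.WiFi.SSID.[Enable==true].SSID", []map[string]string{{"SSID": "home"}, {"SSID": "guest"}, {"SSID": "iot"}}},
		{"Device.WiFi.Radio.[Enable==true].Channel", []map[string]string{{"Channel": "6"}, {"Channel": "auto"}}},
	})
	fmt.Printf("%+v\n", out)
}
```

Adding another parameter then means adding one entry to the table instead of another `if` block in the loop.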

|

||||

|

||||

func (a *Api) deviceGetParameterInstances(w http.ResponseWriter, r *http.Request) {

|

||||

vars := mux.Vars(r)

|

||||

sn := vars["sn"]

|

||||

|

|

@ -281,7 +425,7 @@ func (a *Api) deviceGetParameterInstances(w http.ResponseWriter, r *http.Request

|

|||

//a.Broker.Request(tr369Message, usp_msg.Header_GET, "oktopus/v1/agent/"+sn, "oktopus/v1/get/"+sn)

|

||||

a.MsgQueue[msg.Header.MsgId] = make(chan usp_msg.Msg)

|

||||

log.Println("Sending Msg:", msg.Header.MsgId)

|

||||

a.Broker.Publish(tr369Message, "oktopus/v1/agent/"+sn, "oktopus/v1/api/"+sn)

|

||||

a.Broker.Publish(tr369Message, "oktopus/v1/agent/"+sn, "oktopus/v1/api/"+sn, false)

|

||||

|

||||

select {

|

||||

case msg := <-a.MsgQueue[msg.Header.MsgId]:

|

||||

|

|

@ -331,7 +475,7 @@ func (a *Api) deviceGetSupportedParametersMsg(w http.ResponseWriter, r *http.Req

|

|||

//a.Broker.Request(tr369Message, usp_msg.Header_GET, "oktopus/v1/agent/"+sn, "oktopus/v1/get/"+sn)

|

||||

a.MsgQueue[msg.Header.MsgId] = make(chan usp_msg.Msg)

|

||||

log.Println("Sending Msg:", msg.Header.MsgId)

|

||||

a.Broker.Publish(tr369Message, "oktopus/v1/agent/"+sn, "oktopus/v1/api/"+sn)

|

||||

a.Broker.Publish(tr369Message, "oktopus/v1/agent/"+sn, "oktopus/v1/api/"+sn, false)

|

||||

|

||||

select {

|

||||

case msg := <-a.MsgQueue[msg.Header.MsgId]:

|

||||

|

|

@ -381,7 +525,7 @@ func (a *Api) deviceCreateMsg(w http.ResponseWriter, r *http.Request) {

|

|||

//a.Broker.Request(tr369Message, usp_msg.Header_GET, "oktopus/v1/agent/"+sn, "oktopus/v1/get/"+sn)

|

||||

a.MsgQueue[msg.Header.MsgId] = make(chan usp_msg.Msg)

|

||||

log.Println("Sending Msg:", msg.Header.MsgId)

|

||||

a.Broker.Publish(tr369Message, "oktopus/v1/agent/"+sn, "oktopus/v1/api/"+sn)

|

||||

a.Broker.Publish(tr369Message, "oktopus/v1/agent/"+sn, "oktopus/v1/api/"+sn, false)

|

||||

|

||||

select {

|

||||

case msg := <-a.MsgQueue[msg.Header.MsgId]:

|

||||

|

|

@ -432,7 +576,7 @@ func (a *Api) deviceGetMsg(w http.ResponseWriter, r *http.Request) {

|

|||

a.MsgQueue[msg.Header.MsgId] = make(chan usp_msg.Msg)

|

||||

|

||||

log.Println("Sending Msg:", msg.Header.MsgId)

|

||||

a.Broker.Publish(tr369Message, "oktopus/v1/agent/"+sn, "oktopus/v1/api/"+sn)

|

||||

a.Broker.Publish(tr369Message, "oktopus/v1/agent/"+sn, "oktopus/v1/api/"+sn, false)

|

||||

|

||||

select {

|

||||

case msg := <-a.MsgQueue[msg.Header.MsgId]:

|

||||

|

|

@ -482,7 +626,7 @@ func (a *Api) deviceDeleteMsg(w http.ResponseWriter, r *http.Request) {

|

|||

//a.Broker.Request(tr369Message, usp_msg.Header_GET, "oktopus/v1/agent/"+sn, "oktopus/v1/get/"+sn)

|

||||

a.MsgQueue[msg.Header.MsgId] = make(chan usp_msg.Msg)

|

||||

log.Println("Sending Msg:", msg.Header.MsgId)

|

||||

a.Broker.Publish(tr369Message, "oktopus/v1/agent/"+sn, "oktopus/v1/api/"+sn)

|

||||

a.Broker.Publish(tr369Message, "oktopus/v1/agent/"+sn, "oktopus/v1/api/"+sn, false)

|

||||

|

||||

select {

|

||||

case msg := <-a.MsgQueue[msg.Header.MsgId]:

|

||||

|

|

@ -532,7 +676,7 @@ func (a *Api) deviceUpdateMsg(w http.ResponseWriter, r *http.Request) {

|

|||

//a.Broker.Request(tr369Message, usp_msg.Header_GET, "oktopus/v1/agent/"+sn, "oktopus/v1/get/"+sn)

|

||||

a.MsgQueue[msg.Header.MsgId] = make(chan usp_msg.Msg)

|

||||

log.Println("Sending Msg:", msg.Header.MsgId)

|

||||

a.Broker.Publish(tr369Message, "oktopus/v1/agent/"+sn, "oktopus/v1/api/"+sn)

|

||||

a.Broker.Publish(tr369Message, "oktopus/v1/agent/"+sn, "oktopus/v1/api/"+sn, false)

|

||||

|

||||

select {

|

||||

case msg := <-a.MsgQueue[msg.Header.MsgId]:

|

||||

|

|

@ -613,6 +757,20 @@ func (a *Api) registerAdminUser(w http.ResponseWriter, r *http.Request) {

|

|||

return

|

||||

}

|

||||

|

||||

users, err := a.Db.FindAllUsers()

|

||||

if err != nil {

|

||||

log.Println(err)

|

||||

w.WriteHeader(http.StatusInternalServerError)

|

||||

return

|

||||

}

|

||||

adminExists := adminUserExists(users)

|

||||

if adminExists {

|

||||

log.Println("There might exist only one admin")

|

||||

w.WriteHeader(http.StatusBadRequest)

|

||||

json.NewEncoder(w).Encode("There might exist only one admin")

|

||||

return

|

||||

}

|

||||

|

||||

user.Level = AdminUser

|

||||

|

||||

if err := user.HashPassword(user.Password); err != nil {

|

||||

|

|

@ -626,6 +784,29 @@ func (a *Api) registerAdminUser(w http.ResponseWriter, r *http.Request) {

|

|||

}

|

||||

}

|

||||

|

||||

func adminUserExists(users []map[string]interface{}) bool {

|

||||

for _, x := range users {

|

||||

if x["level"].(int32) == AdminUser {

|

||||

log.Println("Admin exists")

|

||||

return true

|

||||

}

|

||||

}

|

||||

return false

|

||||

}
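The assertion `x["level"].(int32)` panics if a user document has no `level` field or stores it with a different numeric type. Whether that can happen depends on how `FindAllUsers` decodes documents, which this diff does not show. Under that caveat, a more defensive variant could use a type switch. The names `levelOf` and `hasAdmin` are hypothetical.

```go
package main

import "fmt"

const (
	NormalUser = iota
	AdminUser
)

// levelOf extracts the user level from a decoded document without panicking.
// It reports false when the field is missing or not a supported numeric type.
func levelOf(user map[string]interface{}) (int64, bool) {
	switch v := user["level"].(type) {
	case int32:
		return int64(v), true
	case int64:
		return v, true
	case int:
		return int64(v), true
	case float64:
		return int64(v), true
	default:
		return 0, false
	}
}

// hasAdmin reports whether any user in the list has the admin level.
func hasAdmin(users []map[string]interface{}) bool {
	for _, u := range users {
		if lvl, ok := levelOf(u); ok && lvl == AdminUser {
			return true
		}
	}
	return false
}

func main() {
	users := []map[string]interface{}{
		{"level": int32(NormalUser)},
		{"name": "no level field"},
		{"level": int64(AdminUser)},
	}
	fmt.Println(hasAdmin(users))
}
```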

|

||||

|

||||

func (a *Api) adminUserExists(w http.ResponseWriter, r *http.Request) {

|

||||

|

||||

users, err := a.Db.FindAllUsers()

|

||||

if err != nil {

|

||||

log.Println(err)

|

||||

w.WriteHeader(http.StatusInternalServerError)

|

||||

return

|

||||

}

|

||||

adminExits := adminUserExists(users)

|

||||

json.NewEncoder(w).Encode(adminExits)

|

||||

return

|

||||

}

|

||||

|

||||

type TokenRequest struct {

|

||||

Email string `json:"email"`

|

||||

Password string `json:"password"`

|

||||

|

|

|

|||

|

|

@ -2,7 +2,7 @@ package mqtt

|

|||

|

||||

import (

|

||||

"context"

|

||||

"crypto/tls"

|

||||

"github.com/eclipse/paho.golang/autopaho"

|

||||

"github.com/eclipse/paho.golang/paho"

|

||||

"github.com/leandrofars/oktopus/internal/db"

|

||||

usp_msg "github.com/leandrofars/oktopus/internal/usp_message"

|

||||

|

|

@ -10,8 +10,8 @@ import (

|

|||

"github.com/leandrofars/oktopus/internal/utils"

|

||||

"google.golang.org/protobuf/proto"

|

||||

"log"

|

||||

"net"

|

||||

"os"

|

||||

"net/url"

|

||||

"strconv"

|

||||

"strings"

|

||||

"sync"

|

||||

"time"

|

||||

|

|

@ -27,43 +27,65 @@ type Mqtt struct {

|

|||

QoS int

|

||||

SubTopic string

|

||||

DevicesTopic string

|

||||

DisconnectTopic string

|

||||

TLS bool

|

||||

DB db.Database

|

||||

MsgQueue map[string](chan usp_msg.Msg)

|

||||

QMutex *sync.Mutex

|

||||

}

|

||||

|

||||

var c *paho.Client

|

||||

const (

|

||||

ONLINE = iota

|

||||

OFFLINE

|

||||

)

|

||||

|

||||

var c *autopaho.ConnectionManager

|

||||

|

||||

/* ------------------- Implementations of broker interface ------------------ */

|

||||

|

||||

func (m *Mqtt) Connect() {

|

||||

devices := make(chan *paho.Publish)

|

||||

|

||||

broker, _ := url.Parse("tcp://" + m.Addr + ":" + m.Port)

|

||||

|

||||

status := make(chan *paho.Publish)

|

||||

controller := make(chan *paho.Publish)

|

||||

disconnect := make(chan *paho.Publish)

|

||||

apiMsg := make(chan *paho.Publish)

|

||||

go m.messageHandler(devices, controller, disconnect, apiMsg)

|

||||

clientConfig := m.startClient(devices, controller, disconnect, apiMsg)

|

||||

connParameters := startConnection(m.Id, m.User, m.Passwd)

|

||||

|

||||

conn, err := clientConfig.Connect(m.Ctx, &connParameters)

|

||||

if err != nil {

|

||||

log.Println(err)

|

||||

}

|

||||

if conn.ReasonCode != 0 {

|

||||

log.Fatalf("Failed to connect to %s : %d - %s", m.Addr+m.Port, conn.ReasonCode, conn.Properties.ReasonString)

|

||||

}

|

||||

|

||||

// Sets global client to be used by other mqtt functions

|

||||

c = clientConfig

|

||||

go m.messageHandler(status, controller, apiMsg)

|

||||

pahoClientConfig := m.buildClientConfig(status, controller, apiMsg)

|

||||

|

||||

autopahoClientConfig := autopaho.ClientConfig{

|

||||

BrokerUrls: []*url.URL{broker},

|

||||

KeepAlive: 30,

|

||||

ConnectRetryDelay: 5 * time.Second,

|

||||

ConnectTimeout: 5 * time.Second,

|

||||

OnConnectionUp: func(cm *autopaho.ConnectionManager, connAck *paho.Connack) {

|

||||

log.Printf("Connected to broker--> %s:%s", m.Addr, m.Port)

|

||||

m.Subscribe()

|

||||

},

|

||||

OnConnectError: func(err error) {

|

||||

log.Printf("Error while attempting connection: %s\n", err)

|

||||

},

|

||||

ClientConfig: *pahoClientConfig,

|

||||

}

|

||||

|

||||

if m.User != "" && m.Passwd != "" {

|

||||

autopahoClientConfig.SetUsernamePassword(m.User, []byte(m.Passwd))

|

||||

}

|

||||

|

||||

log.Println("MQTT client id:", pahoClientConfig.ClientID)
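`Connect` builds the broker URL by string concatenation and discards the error from `url.Parse`. A small sketch of a stricter helper, using only the standard library, is shown below. The name `brokerURL` is hypothetical, and `net.JoinHostPort` is swapped in so that IPv6 literals are bracketed correctly.

```go
package main

import (
	"fmt"
	"net"
	"net/url"
)

// brokerURL builds a tcp:// URL for the MQTT broker and surfaces parse errors
// instead of ignoring them. Hypothetical helper, not part of this commit.
func brokerURL(addr, port string) (*url.URL, error) {
	u, err := url.Parse("tcp://" + net.JoinHostPort(addr, port))
	if err != nil {
		return nil, fmt.Errorf("invalid broker address %q port %q: %w", addr, port, err)
	}
	return u, nil
}

func main() {
	u, err := brokerURL("localhost", "1883")
	fmt.Println(u, err)
}
```

Returning the error lets the caller fail early with a clear message when the `-a` or `-p` flag is malformed, before a nil URL reaches the connection manager.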

|

||||